Example

Let’s start with the following example of an integration test:

    @Test
    void getRemoteRequests() throws Exception {
        assertSuccessfulGetId(X_CORRELATION_ID, X_REQUEST_ID);
        MvcResult mvcResult = runGetRemoteRequests(X_CORRELATION_ID, true);
        assertThat(mvcResult).hasStatusCode(HttpStatus.ACCEPTED);
        assertRequestResponse();
    }

This test method is part of a microservice that communicates with a third-party system and offers various operations, including getId and getRemoteRequests, both of which are relevant in our example.

In this integration test, assertSuccessfulGetId executes the getId operation and verifies that it succeeded. What exactly getId does, and what the Id can be used for, is irrelevant here; the only relevant element is the call to the third-party system. The purpose of getRemoteRequests is to return all requests previously sent to the third-party system. In this test, executing getRemoteRequests returns a JSON object that contains the getId request sent to the third-party system.

We now want to make sure that the return value of getRemoteRequests meets our expectations. In other words, the request contained in the response must really match the regular request that getId sends to the third-party system.

This process can be implemented in various ways, but it is usually very labour-intensive.
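To illustrate why this gets labour-intensive, here is a minimal sketch of the hand-written alternative. The RecordedRequest type, the HTTP method, the path, and the header name are hypothetical placeholders chosen for illustration; they are not taken from the microservice described here.

```java
import java.util.Map;

// Hypothetical stand-in for one recorded request; not a type from the microservice.
final class RecordedRequest {
    final String method;
    final String path;
    final Map<String, String> headers;

    RecordedRequest(String method, String path, Map<String, String> headers) {
        this.method = method;
        this.path = path;
        this.headers = headers;
    }
}

final class ManualAssertionsSketch {

    // Each property needs its own hand-written check; every new field adds another line.
    static void assertLooksLikeGetIdRequest(RecordedRequest request, String correlationId) {
        check("GET".equals(request.method), "unexpected method: " + request.method);
        check("/id".equals(request.path), "unexpected path: " + request.path);
        check(correlationId.equals(request.headers.get("X-Correlation-ID")),
            "unexpected correlation id: " + request.headers.get("X-Correlation-ID"));
    }

    private static void check(boolean condition, String message) {
        if (!condition) {
            throw new AssertionError(message);
        }
    }
}
```

Even in this reduced form, every additional header or body field would need another explicit check, and a failure reports only the first mismatch rather than the full request.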

Validation File Assertions

This is where Validation File Assertions come into play. The objects to be checked are converted into text form so that they can be compared quickly and clearly on a file-by-file basis. This allows multiple assertions to be executed in one step, which in turn minimizes the effort required to create tests – a big advantage.

In addition, the Validation File Assertion not only performs the check of the assertion itself, but also shows it directly in the correct context.

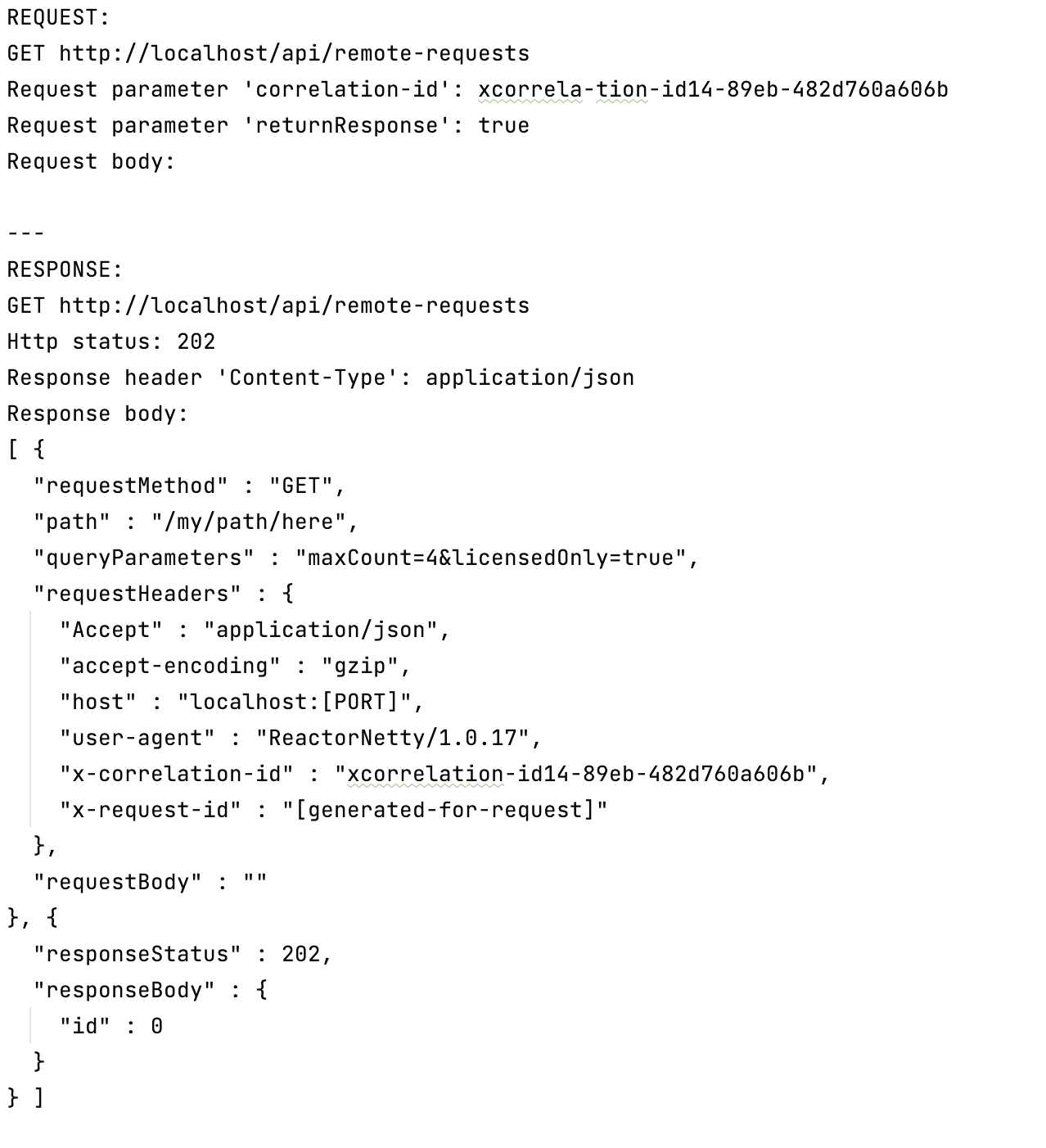

In our example, the validation file contains the request and response of getRemoteRequests, and the response in turn contains the request of getId.

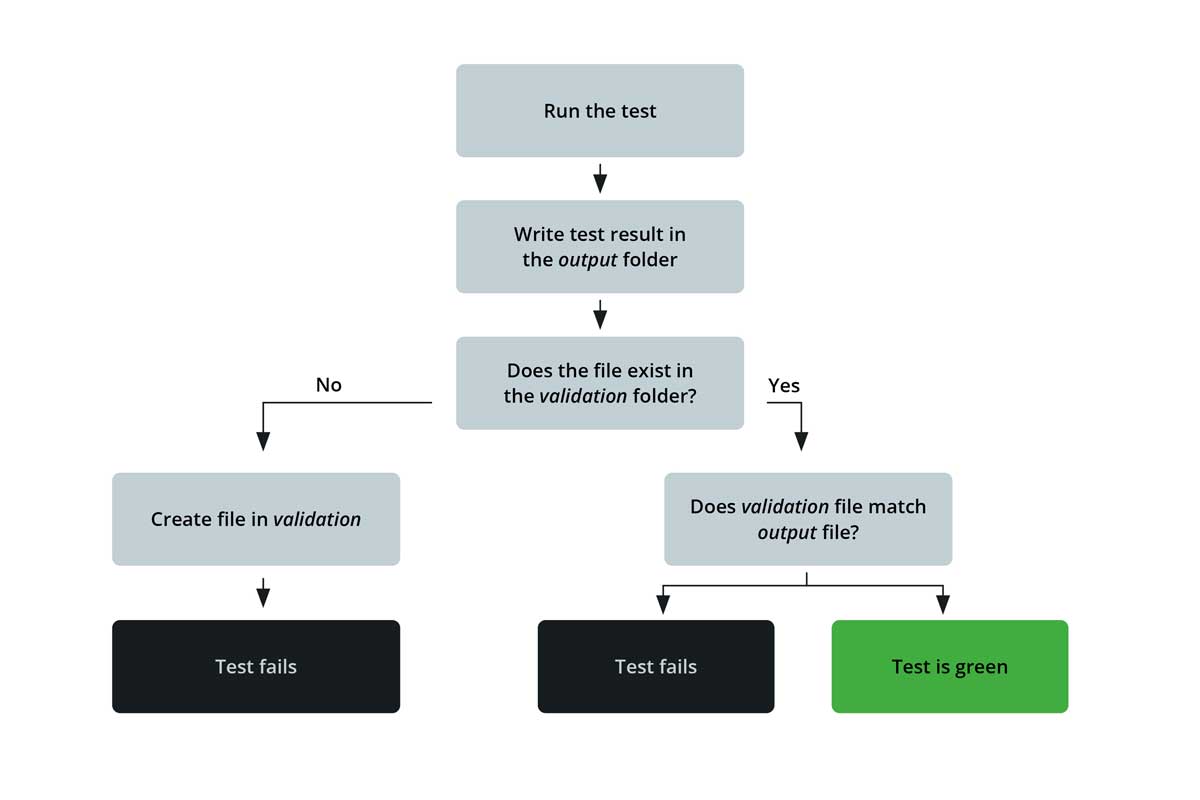

Validation File Assertions go through the following stages when executing the test:

1. The test result is first written to a text file in the “output” folder.
2. A second folder, labeled “validation”, is used to check whether the file already exists.
3. If the file does not yet exist there, it is created automatically and marked with a “new file” marker. If it already exists, the actual check can proceed (see step 6).
4. The developer views the automatically created file in the “validation” folder once and checks whether it meets expectations and should serve as a reference.
5. If it does, the developer removes the marker, and the file then counts as the approved reference result of the test.
6. Each time the test is run again, the newly written output file is compared with the previously approved reference file.
7. Two results are now possible: if the files are identical, the test passes; if they differ, the test fails.

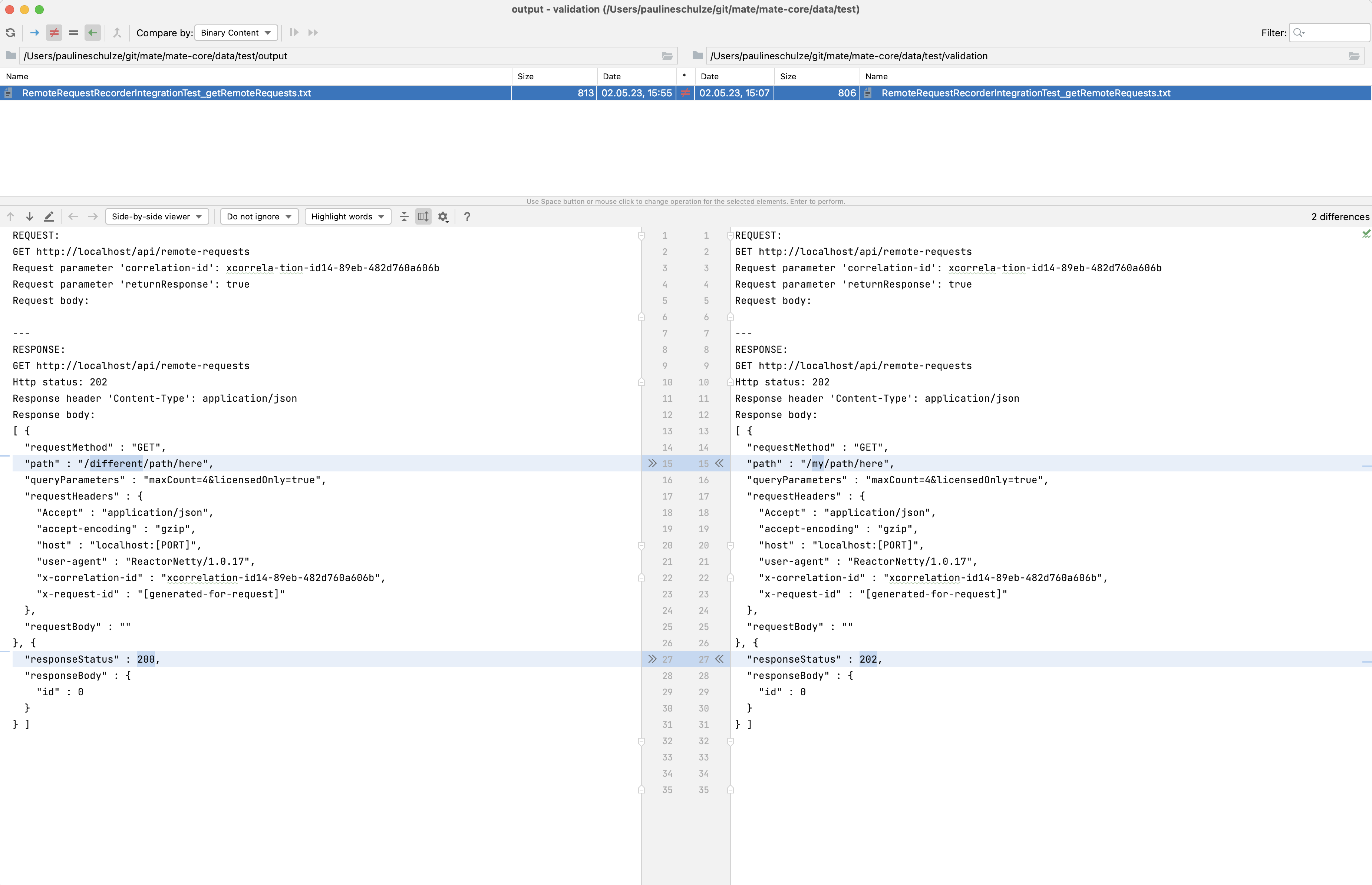
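The stages above can be sketched in a few lines of plain Java. This is a simplified illustration, not the library's implementation: the class name, the marker text, and the approve helper (which only simulates the developer's manual review in stages 4 and 5) are assumptions made for this example.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

final class ValidationFileFlowSketch {

    // Hypothetical marker text; the real library may use a different one.
    static final String NEW_FILE_MARKER = "=== new file ===";

    /** Returns true if the actual output equals the content of the reference file. */
    static boolean matchesReference(Path baseDir, String fileName, String actual) {
        try {
            Path outputFile = baseDir.resolve("output").resolve(fileName);
            Path validationFile = baseDir.resolve("validation").resolve(fileName);
            Files.createDirectories(outputFile.getParent());
            Files.createDirectories(validationFile.getParent());

            // Stage 1: always write the current result to the "output" folder.
            Files.write(outputFile, actual.getBytes(StandardCharsets.UTF_8));

            // Stages 2-3: create a marked reference file if none exists yet.
            if (Files.notExists(validationFile)) {
                String prefilled = NEW_FILE_MARKER + "\n" + actual;
                Files.write(validationFile, prefilled.getBytes(StandardCharsets.UTF_8));
            }

            // Stages 6-7: compare; an unreviewed file still carries the marker and therefore differs.
            String expected = new String(Files.readAllBytes(validationFile), StandardCharsets.UTF_8);
            return expected.equals(actual);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Simulates stages 4-5: the developer reviews the file and removes the marker. */
    static void approve(Path baseDir, String fileName) {
        try {
            Path validationFile = baseDir.resolve("validation").resolve(fileName);
            String content = new String(Files.readAllBytes(validationFile), StandardCharsets.UTF_8);
            String prefix = NEW_FILE_MARKER + "\n";
            String approved = content.startsWith(prefix) ? content.substring(prefix.length()) : content;
            Files.write(validationFile, approved.getBytes(StandardCharsets.UTF_8));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

In this sketch the first run fails because the freshly created reference file still contains the marker. Once the marker has been removed, identical output passes and any deviation fails.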

cronn’s IntelliJ Plug-in

To make it easier for developers to check validation files, cronn has developed an IntelliJ plug-in. The plug-in makes verification even easier, as it shows the differences between the validation files.

Using the example given at the beginning, we can see what a failed validation file comparison with the plug-in looks like:

What code is hidden behind the Validation File Assertions?

Let’s go back to our example from the beginning:

Behind the last line of the test, assertRequestResponse(), is a call to cronn’s open source project, which performs the comparison of validation files. In detail, assertRequestResponse calls the following compareActualWithFile method:

    package de.cronn.assertions.validationfile.util;

    public final class FileBasedComparisonUtils {

        public static void compareActualWithFile(String actualOutput, String filename, ValidationNormalizer normalizer) {
            // Keep the unmodified output in a temporary ".raw" file
            String fileNameRawFile = filename + ".raw";
            writeTmp(actualOutput, fileNameRawFile);

            // Mask variable parts (if a normalizer is given) and unify line endings
            String normalizedOutput = normalizer != null ? normalizer.normalize(actualOutput) : actualOutput;
            String normalizedActual = normalizeLineEndings(normalizedOutput);

            // Create the validation file if it does not exist yet, then read it as the expected value
            prefillIfNecessary(filename, normalizedActual);
            String expected = readValidationFile(filename);

            // Write the normalized result to the "output" folder and compare
            writeOutput(normalizedActual, filename);
            assertEquals(expected, normalizedActual, filename);
        }

    }

The method expects three parameters: the object to be compared as a string, the file name (also a string), and a so-called “normalizer”.

In our example, we recorded requests and responses as a string. This string will be our object of comparison. In principle, any object can be passed into the method as a string. This can be achieved, for example, with the “toString” method.

There are two ways to determine the file name: it can be chosen individually on a project-by-project basis or derived automatically.

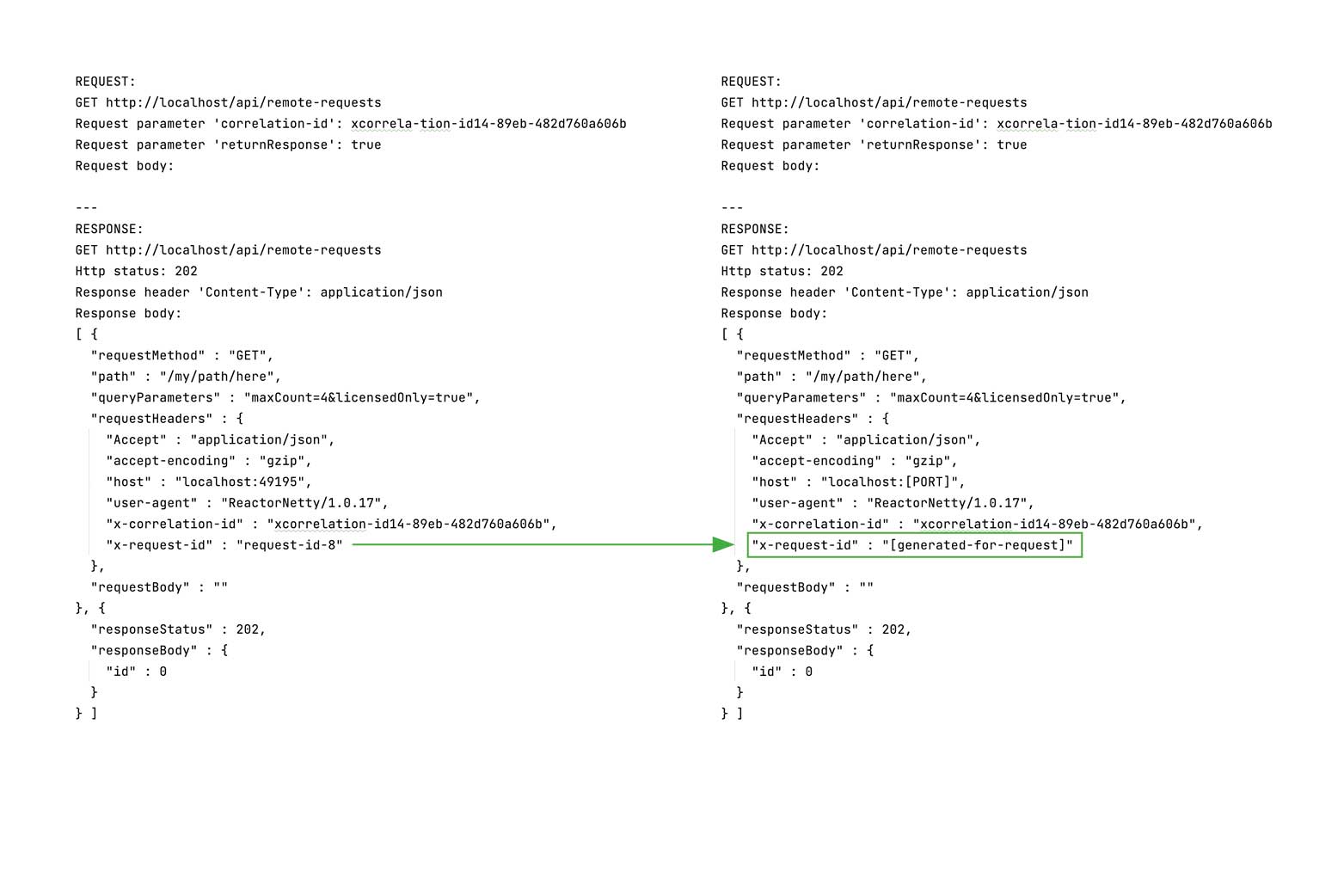
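As an illustration of automatic naming, the following sketch derives a file name from the test class and test method. The naming scheme, the class name, and the optional suffix parameter are assumptions made for this example; the library's actual scheme may differ.

```java
final class ValidationFileNameSketch {

    // Builds "<TestClass>_<testMethod>[_<suffix>].txt"; this scheme is an assumption for illustration.
    static String fileNameFor(Class<?> testClass, String testMethod, String suffix) {
        StringBuilder name = new StringBuilder(testClass.getSimpleName())
            .append('_')
            .append(testMethod);
        if (suffix != null && !suffix.isEmpty()) {
            // An optional suffix keeps several validation files of one test apart.
            name.append('_').append(suffix);
        }
        return name.append(".txt").toString();
    }
}
```

Deriving the name from class and method keeps each validation file unambiguously tied to the test that produced it, without any manual bookkeeping.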

Normalizers are a central part of validation file assertions. Under certain circumstances, parts of the object to be compared may change with each test run. In our example, we compare requests. These have a unique requestId, which may differ for each test run. In such a case the validation file assertions would fail with every test execution. This is where normalizers come into play: they make it possible to mask project-specific changes and thus prevent the test from failing. Here is another example which explores this in more detail:

Concrete approach

In the compareActualWithFile method, a “temp file” with the individually or automatically created file name is generated first. After that, the normalizers are applied; they mask the variable information.

If the validation file does not already exist, the method prefillIfNecessary creates it in the “validation” folder and marks it with the “new file” marker. After that, we read either the newly created file or the already existing one. We save our masked output in the “output” folder and compare it with the file from the “validation” folder.

This method thus implements exactly the process illustrated in the diagram above.

For the normalizers, our project provides the type ValidationNormalizer. In the simplest case, a normalizer is based on a regular expression (regex) and looks like this:

    protected ValidationNormalizer requestIdNormalizer() {
        return s ->
            s.replaceAll(" " + RequestIdGenerator.PREFIX + "\\d+", " [generated-for-request]");
    }

The effect of this normalizer looks like this in our example:

There are no limits to creativity when creating these normalizers – the masked elements can also be found by other approaches. However, for the sake of legibility, the simplest case is shown.
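As a sketch of how several maskings could be combined, the following example chains two normalizers. It uses java.util.function.Function as a stand-in for ValidationNormalizer so that it runs on its own; the prefix "req-", the UUID masking, and all names are assumptions made for this illustration.

```java
import java.util.function.Function;
import java.util.regex.Pattern;

final class NormalizerSketch {

    // Hypothetical prefix standing in for RequestIdGenerator.PREFIX.
    static final String REQUEST_ID_PREFIX = "req-";

    // Matches the canonical 8-4-4-4-12 hexadecimal UUID notation.
    static final Pattern UUID_PATTERN = Pattern.compile(
        "[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}");

    // Same idea as the regex normalizer above: mask the generated request ID.
    static Function<String, String> requestIdNormalizer() {
        return s -> s.replaceAll(
            " " + Pattern.quote(REQUEST_ID_PREFIX) + "\\d+", " [generated-for-request]");
    }

    // A second, independent masking for random UUIDs.
    static Function<String, String> uuidNormalizer() {
        return s -> UUID_PATTERN.matcher(s).replaceAll("[uuid]");
    }

    // Applies the request ID masking first, then the UUID masking.
    static Function<String, String> combined() {
        return requestIdNormalizer().andThen(uuidNormalizer());
    }
}
```

Applied to the text "X-Request-ID: req-12345" followed by a line "trace: 123e4567-e89b-12d3-a456-426614174000", the combined normalizer yields "X-Request-ID: [generated-for-request]" and "trace: [uuid]", so the validation file stays stable across test runs.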

Result

Validation files allow developers to easily compare complex objects with each other. Although the verification of the file(s) still requires double-checking by the developers, the validation files cut out the complicated and, above all, lengthy process of writing individual assertions. Validation files can also be used to cover an important aspect of regression testing: a lot of information is tested alongside the target value. For example, the request structure is also checked in our example test – changes in the API would be immediately noticeable.

The speed of validation files should not be overlooked either. Since they are based on plain text files, the performance overhead is negligible. Changing information must be masked, but this can be done quickly on a project-specific basis using the provided objects.

The form in which validation file assertions are used in practice depends on the scope of the respective components. If the scope is small, it may still make sense to use simple assertions. Nevertheless, validation file assertions can make testing much easier – especially for complex objects – by reducing the level of complexity.